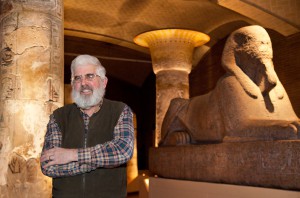

Cerebras Systems develops computing chips with the sole purpose of accelerating AI. Andrew Feldman is co-founder and CEO of Cerebras Systems. Company profile: cerebras.net; sector: Technology Hardware; founded: 2016; funding to date: $720.14 million. The Cerebras WSE is based on a fine-grained data flow architecture, and the company says its selectable sparsity harvesting is something no other architecture is capable of. A single 15U CS-1 system purportedly replaces some 15 racks of servers containing over 1,000 GPUs. Over the past three years, the largest AI models have increased their parameter count by three orders of magnitude, with the largest models now using 1 trillion parameters. The WSE-2 contains 2.6 trillion transistors and covers more than 46,225 square millimeters of silicon. The stock price for Cerebras will be known only once the company becomes public.
The company is a startup backed by premier venture capitalists and the industry's most successful technologists. Feldman is an entrepreneur dedicated to pushing boundaries in the compute space. SeaMicro, his previous company, was acquired by AMD in 2012 for $357M. Cerebras said the new funding round values it at $4 billion. Purpose-built for AI and HPC, the field-proven CS-2 replaces racks of GPUs. The ability to fit every model layer in on-chip memory without needing to partition means each CS-2 can be given the same workload mapping for a neural network and do the same computations for each layer, independently of all other CS-2s in the cluster. The Cerebras SwarmX technology extends the boundary of AI clusters by extending the Cerebras on-chip fabric off-chip. Parameters are the part of a machine ... "Cerebras is the company whose architecture is skating to where the puck is going: huge AI." (Karl Freund, Principal, Cambrian AI Research) "The wafer-scale approach is unique and clearly better for big models than much smaller GPUs."
Large clusters have historically been plagued by setup and configuration challenges, often taking months to fully prepare before they are ready to run real applications. Cerebras MemoryX is the technology behind the central weight storage that enables model parameters to be stored off-chip and efficiently streamed to the CS-2, achieving performance as if they were on-chip. Cerebras Systems, a Silicon Valley-based startup developing a massive computing chip for artificial intelligence, said on Wednesday that it has raised an additional $250 million in venture funding. The company has expanded with offices in Canada and Japan and has about 400 employees, Feldman said, but aims to have 600 by the end of next year. OAKLAND, Calif., Nov 14 (Reuters) - Silicon Valley startup Cerebras Systems, known in the industry for its dinner plate-sized chip made for artificial intelligence work, on Monday unveiled its ... Endorsements quoted for the company include: "It takes a lot to go head-to-head with NVIDIA on AI training, but Cerebras has a differentiated approach that may end up being a winner."; "The Cerebras CS-2 is a critical component that allows GSK to train language models using biological datasets at a scale and size previously unattainable."; and "Years later, [Cerebras] is still perhaps the most differentiated competitor to NVIDIA's AI platform."
"For the first time we will be able to explore brain-sized models, opening up vast new avenues of research and insight." "One of the largest challenges of using large clusters to solve AI problems is the complexity and time required to set up, configure and then optimize them for a specific neural network," said Karl Freund, founder and principal analyst, Cambrian AI. Dealing with potential drops in model accuracy takes additional hyperparameter and optimizer tuning to get models to converge at extreme batch sizes. The largest AI hardware clusters were on the order of 1% of human brain scale, or about 1 trillion synapse equivalents, called parameters. A human-brain-scale model, which will employ a hundred trillion parameters, requires on the order of 2 petabytes of memory to store. On the delta pass of neural network training, gradients are streamed out of the wafer to the central store, where they are used to update the weights. Even though Cerebras relies on an outside manufacturer to make its chips, it still incurs significant capital costs for what are called lithography masks, a key component needed to mass-manufacture chips. The head office is in Sunnyvale. Before SeaMicro, Andrew was the Vice President of Product Management, Marketing and BD at Force10.
MemoryX contains both the storage for the weights and the intelligence to precisely schedule and perform weight updates to prevent dependency bottlenecks. The MemoryX architecture is elastic and designed to enable configurations ranging from 4TB to 2.4PB, supporting parameter sizes from 200 billion to 120 trillion. Unlike with graphics processing units, where the small amount of on-chip memory requires large models to be partitioned across multiple chips, the WSE-2 can fit and execute extremely large layers without traditional blocking or partitioning. "Today, Cerebras moved the industry forward by increasing the size of the largest networks possible by 100 times," said Andrew Feldman, CEO and co-founder of Cerebras. Cerebras does not currently have an official ticker symbol because the company is still private. Statements about a possible listing should not be interpreted to mean that the company is formally pursuing or forgoing an IPO.
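
The MemoryX capacity range quoted above (4TB for 200 billion parameters, 2.4PB for 120 trillion) and the earlier figure of about 2 petabytes for a hundred-trillion-parameter model can be checked against each other with a little arithmetic. The sketch below is only an illustration: it assumes decimal units (1 TB = 10^12 bytes), and the resulting bytes-per-parameter figure is derived here, not a number published in the text.

```python
# Back-of-the-envelope check of the quoted memory figures.
# Assumption: decimal units (1 TB = 10**12 bytes, 1 PB = 10**15 bytes).
TB = 10**12
PB = 10**15


def bytes_per_parameter(capacity_bytes, parameter_count):
    """Return the memory budget implied for each model parameter."""
    return capacity_bytes / parameter_count


# (capacity in bytes, parameter count) pairs taken from the text.
quoted_figures = {
    "MemoryX smallest configuration": (4 * TB, 200 * 10**9),
    "MemoryX largest configuration": (2_400 * TB, 120 * 10**12),
    "human-brain-scale model": (2 * PB, 100 * 10**12),
}

for name, (capacity, parameters) in quoted_figures.items():
    budget = bytes_per_parameter(capacity, parameters)
    print(f"{name}: {budget:.0f} bytes per parameter")
```

All three quoted pairs work out to the same budget per parameter, which suggests the figures come from one sizing rule rather than being independent estimates.
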
"This funding is dry powder to continue to do fearless engineering to make aggressive engineering choices, and to continue to try and do things that aren't incrementally better, but that are vastly better than the competition," Feldman told Reuters in an interview. Reuters reported on Nov 10 that the additional $250 million in venture funding brings the company's total to date to $720 million. Prior to Cerebras, Feldman co-founded and was CEO of SeaMicro, a pioneer of energy-efficient, high-bandwidth microservers. Cerebras is a technology company that specializes in developing and providing artificial intelligence (AI) processing solutions. According to the company, the Cerebras Wafer-Scale Cluster delivers near-linear scaling and a remarkably simple programming model. Similar public companies include Hewlett Packard Enterprise (NYSE: HPE), Nvidia (NASDAQ: NVDA), Dell Technologies (NYSE: DELL), Sony (NYSE: SONY), and IBM (NYSE: IBM).
"To achieve this, we need to combine our strengths with those who enable us to go faster, higher, and stronger. We count on the CS-2 system to boost our multi-energy research and give our research athletes that extra competitive advantage."
Cerebras develops AI and deep learning applications. The company was founded in 2016 and is based in Los Altos, California. The WSE-2 also has 123x more cores and 1,000x more high-performance on-chip memory than graphics processing unit competitors. With Cerebras, blazing-fast training, ultra-low-latency inference, and record-breaking time-to-solution enable you to achieve your most ambitious AI goals.
As more graphics processors were added to a cluster, each contributed less and less to solving the problem. Larger networks, such as GPT-3, have already transformed the natural language processing (NLP) landscape, making possible what was previously unimaginable. The WSE-2 will power the Cerebras CS-2, the industry's fastest AI computer, designed and ... "The Weight Streaming execution model is so elegant in its simplicity, and it allows for a much more fundamentally straightforward distribution of work across the CS-2 cluster's incredible compute resources." On or off-premises, Cerebras Cloud meshes with your current cloud-based workflow to create a secure, multi-cloud solution. Cerebras is working to transition from TSMC's 7-nanometer manufacturing process to its 5-nanometer process, where each mask can cost millions of dollars. Cerebras Systems was founded in 2016 by Andrew Feldman, Gary Lauterbach, Jean-Philippe Fricker, Michael James, and Sean Lie. Investors include Alpha Wave Ventures, Abu Dhabi Growth Fund, Altimeter Capital, Benchmark Capital, Coatue Management, Eclipse Ventures, Moore Strategic Ventures, and VY Capital. The Series F financing round was led by Alpha Wave Ventures and Abu Dhabi Growth Fund (ADG). Related coverage: https://siliconangle.com/2023/02/07/ai-chip-startup-cerebras-systems-announces-pioneering-simulation-computational-fluid-dynamics/, https://www.streetinsider.com/Business+Wire/Green+AI+Cloud+and+Cerebras+Systems+Bring+Industry-Leading+AI+Performance+and+Sustainability+to+Europe/20975533.html.
SUNNYVALE, CALIFORNIA - August 24, 2021 - Cerebras Systems, the pioneer in innovative compute solutions for Artificial Intelligence (AI), today unveiled the world's first brain-scale AI solution. In addition to increasing parameter capacity, Cerebras is also announcing technology that allows the building of very large clusters of CS-2s, up to 192 CS-2s. As the AI community grapples with the exponentially increasing cost to train large models, the use of sparsity and other algorithmic techniques to reduce the compute FLOPs required to train a model to state-of-the-art accuracy is increasingly important. Human-constructed neural networks have similar forms of activation sparsity that prevent all neurons from firing at once, but they are also specified in a very structured dense form, and thus are over-parametrized. And this task needs to be repeated for each network. "This could allow us to iterate more frequently and get much more accurate answers, orders of magnitude faster." The WSE-2, introduced this year, uses denser circuitry, and contains 2.6 trillion transistors collected into eight hundred and ... To date, the company has raised $720 million in financing. Cerebras announcements include: NETL & PSC Pioneer Real-Time CFD on Cerebras Wafer-Scale Engine; Cerebras Delivers Computer Vision for High-Resolution, 25 Megapixel Images; Cerebras Systems & Jasper Partner on Pioneering Generative AI Work; Cerebras + Cirrascale Cloud Services Introduce Cerebras AI Model Studio; Harnessing the Power of Sparsity for Large GPT AI Models; Cerebras Wins the ACM Gordon Bell Special Prize for COVID-19 Research at SC22.
The company's flagship product, the powerful CS-2 system, is used by enterprises across a variety of industries. Cerebras Systems said its CS-2 Wafer Scale Engine 2 processor is a "brain-scale" chip that can power AI models with more than 120 trillion parameters. And yet, graphics processing units multiply by zero routinely. To achieve reasonable utilization on a GPU cluster takes painful, manual work from researchers who typically need to partition the model, spreading it across the many tiny compute units; manage both data parallel and model parallel partitions; manage memory size and memory bandwidth constraints; and deal with synchronization overheads. "TotalEnergies' roadmap is crystal clear: more energy, less emissions." Lawrence Livermore National Laboratory (LLNL) and artificial intelligence (AI) computer company Cerebras Systems have integrated the world's largest computer chip into the National Nuclear Security Administration's (NNSA's) Lassen system, upgrading the top-tier supercomputer with cutting-edge AI technology. Technicians recently completed connecting the Silicon Valley-based company's ... Andrew Feldman, chief executive and co-founder of Cerebras Systems, said much of the new funding will go toward hiring. Patent titles listed for the company include: System and method for alignment of an integrated circuit; Distributed placement of linear operators for accelerated deep learning; Dynamic routing for accelerated deep learning.
"Cerebras has created what should be the industry's best solution for training very large neural networks." (Linley Gwennap, President and Principal Analyst, The Linley Group) "Cerebras' ability to bring large language models to the masses with cost-efficient, easy access opens up an exciting new era in AI." In November 2021, Cerebras announced that it had raised an additional $250 million in Series F funding, valuing the company at over $4 billion. Now valued at $4 billion, Cerebras Systems plans to use its new funds to expand worldwide. Weight Streaming is a new software execution mode in which compute and parameter storage are fully disaggregated from each other. The Weight Streaming technique is particularly well suited to the Cerebras architecture because of the WSE-2's size.
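
The Weight Streaming idea described here (parameters held in a central store apart from the compute, weights streamed in layer by layer, and gradients streamed back out on the delta pass to update the store) can be illustrated with a toy training loop. This is a minimal sketch under loud assumptions: `WeightStore` and `train_step` are hypothetical names invented for illustration, each "layer" is a single scalar weight, and nothing here reflects Cerebras's actual software or hardware interfaces.

```python
class WeightStore:
    """Hypothetical stand-in for a central, off-chip weight store."""

    def __init__(self, weights, learning_rate):
        self.weights = list(weights)
        self.learning_rate = learning_rate

    def stream_weight(self, layer):
        """Send one layer's weight to the compute side."""
        return self.weights[layer]

    def apply_gradient(self, layer, gradient):
        """Receive a gradient and update the stored weight (plain SGD)."""
        self.weights[layer] -= self.learning_rate * gradient


def train_step(store, x, target):
    """One step on a chain of scalar linear layers; weights stay in the store."""
    layer_count = len(store.weights)

    # Forward pass: fetch one layer's weight at a time, keep only activations.
    activations = [x]
    for layer in range(layer_count):
        weight = store.stream_weight(layer)
        activations.append(weight * activations[-1])

    error = activations[-1] - target
    loss = 0.5 * error * error

    # Delta pass: walk the layers in reverse and stream gradients to the store.
    upstream = error  # derivative of the loss w.r.t. this layer's output
    for layer in reversed(range(layer_count)):
        weight = store.stream_weight(layer)  # fetched before it is updated
        gradient = upstream * activations[layer]
        upstream = upstream * weight
        store.apply_gradient(layer, gradient)

    return loss


store = WeightStore([0.5, 0.5], learning_rate=0.1)
losses = [train_step(store, x=1.0, target=1.0) for _ in range(50)]
print(f"first loss {losses[0]:.5f}, last loss {losses[-1]:.5f}")
```

The point of the sketch is the division of labor: the compute side never owns the weights, so the same `train_step` could in principle be run by many workers against one store, which is the property the text credits for simpler cluster scaling.
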
Artificial intelligence in its deep learning form is producing neural networks that will have trillions and trillions of neural weights, or parameters, and the increasing scale ... Cerebras is the developer of a new class of computer designed to accelerate artificial intelligence work by three orders of magnitude beyond the current state of the art. The company's chips offer compute cores, tightly coupled memory for efficient data access, and an extensive high-bandwidth communication fabric for groups of cores to work together, enabling users to accelerate artificial intelligence by orders of magnitude beyond the current state of the art. The Cerebras CS-2 is powered by the Wafer Scale Engine (WSE-2), the largest chip ever made and the fastest AI processor. This combination of technologies will allow users to unlock brain-scale neural networks and distribute work over enormous clusters of AI-optimized cores with push-button ease. "These foundational models form the basis of many of our AI systems and play a vital role in the discovery of transformational medicines." Cerebras reports a valuation of $4 billion. An IPO was described as likely only a matter of time, probably in 2022.
Gone are the challenges of parallel programming and distributed training.


cerebras systems ipo date
